Auto-Encoding Score Distribution Regression for Action Quality Assessment

https://arxiv.org/abs/2111.11029

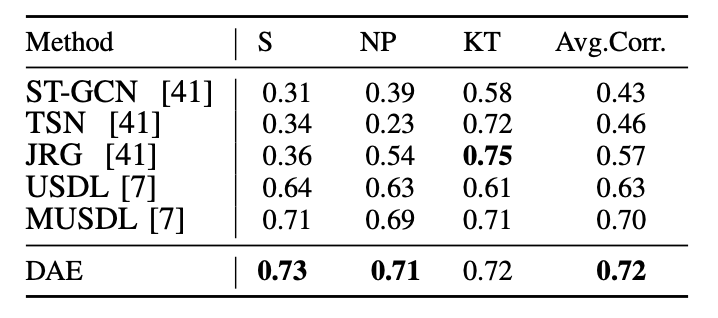

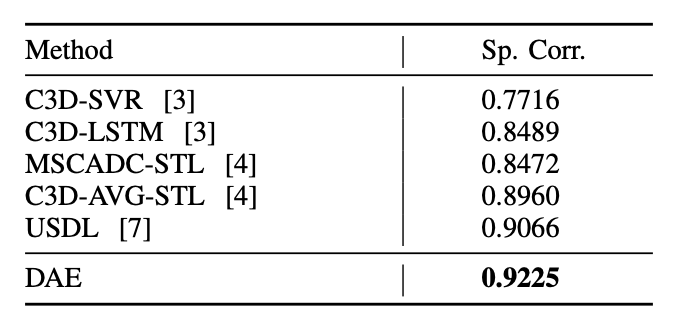

Action quality assessment (AQA) from videos is a challenging vision task because the relation between videos and action scores is difficult to model, and it has been widely studied in the literature. Traditionally, the AQA task is treated as a regression problem that learns the underlying mapping between videos and action scores. More recently, uncertainty score distribution learning (USDL) achieved success by introducing label distribution learning (LDL). However, USDL does not apply to datasets with continuous labels and needs a fixed variance in training. In this paper, to address these problems, we develop the Distribution Auto-Encoder (DAE). DAE takes advantage of both regression algorithms and LDL. Specifically, it encodes videos into distributions and uses the reparameterization trick from variational auto-encoders (VAE) to sample scores, which establishes a more accurate mapping between videos and scores. Meanwhile, a combined loss is constructed to accelerate the training of DAE. DAE-MT is further proposed to deal with AQA on multi-task datasets. We evaluate our DAE approach on the MTL-AQA and JIGSAWS datasets. Experimental results on these public datasets demonstrate that our method achieves state-of-the-art results under Spearman’s rank correlation: 0.9449 on MTL-AQA and 0.73 on JIGSAWS.

AQA

Action Quality Assessment (AQA) automatically scores the quality of actions by analyzing features extracted from videos and images. It differs from the conventional action recognition problem, which only identifies which action is performed. In the past few years, much work has been devoted to AQA tasks in areas such as healthcare, sports video analysis and many others.

Related Work

Parmar et al. [1] proposed C3D-SVR and C3D-LSTM to predict the scores of Olympic events. Additionally, an incremental-label training method was introduced to train the LSTM model, based on the hypothesis that the final score is an aggregation of the sequential sub-action scores.

Tang et al. noticed the underlying ambiguity of action scores and then proposed an improved approach: uncertainty-aware score distribution learning (USDL) [2] to address this problem. USDL is designed based on label distribution learning (LDL), a general learning paradigm to solve problems with uncertainty and answer how much each label describes the instance.
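To make the LDL idea concrete, the sketch below turns a single score into a discrete label distribution by evaluating a Gaussian with a fixed standard deviation on a fixed grid of score bins and normalizing. It is an illustration of the general idea only, not the USDL implementation: the function name `score_to_label_distribution`, the bin grid, and the value of `sigma` are assumptions chosen for the example.

```python
import math


def score_to_label_distribution(score, bin_centers, sigma):
    """Discretize a Gaussian centred on `score` over fixed bins and normalize.

    `sigma` is a hand-picked, fixed standard deviation (an assumption here).
    """
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    weights = [
        math.exp(-((c - score) ** 2) / (2.0 * sigma ** 2)) for c in bin_centers
    ]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical setup: integer score bins 0..100 and a score between two bins.
bins = [float(b) for b in range(0, 101)]
dist = score_to_label_distribution(score=72.5, bin_centers=bins, sigma=5.0)
```

The sketch shows the two limitations the abstract mentions: the score grid is discrete, so a continuous label such as 72.5 falls between bins, and `sigma` has to be fixed before training.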

Method: DAE

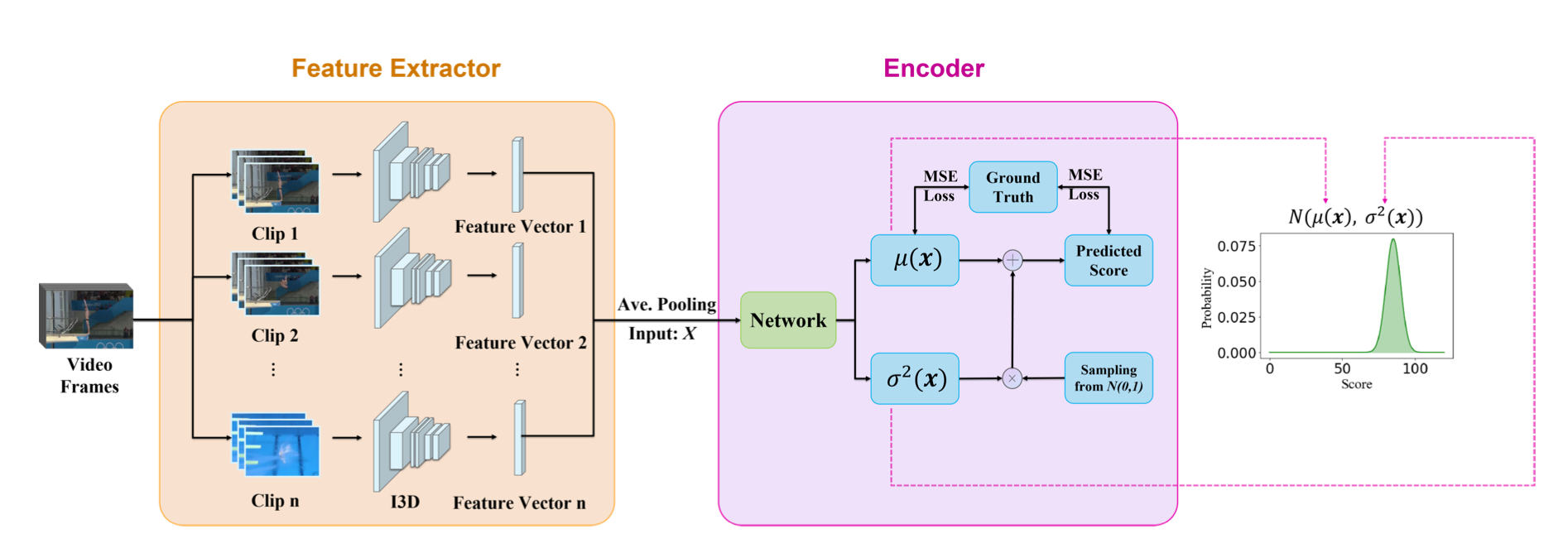

Video Feature Extraction

The input video is divided into n small clips by down-sampling. Then the clips are sent into I3D ConvNets for extracting features. The final features are synthesized by three fully-connected layers.
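The clip division can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `split_into_clips`, the uniform index-based down-sampling, and the frame counts are all assumptions, and the I3D feature extractor and the three fully-connected layers are omitted.

```python
def split_into_clips(num_frames, n_clips, frames_per_clip):
    """Return `n_clips` lists of frame indices, uniformly down-sampled.

    Hypothetical helper: picks n_clips * frames_per_clip evenly spaced
    frame indices from the video, then cuts them into consecutive clips.
    """
    if num_frames <= 0 or n_clips <= 0 or frames_per_clip <= 0:
        raise ValueError("all arguments must be positive")
    total = n_clips * frames_per_clip
    # Evenly spaced indices in [0, num_frames); frames repeat if the video is short.
    picked = [(i * num_frames) // total for i in range(total)]
    return [
        picked[c * frames_per_clip:(c + 1) * frames_per_clip]
        for c in range(n_clips)
    ]


# Hypothetical example: a 100-frame video split into 5 clips of 4 frames each.
clips = split_into_clips(num_frames=100, n_clips=5, frames_per_clip=4)
```

Each list of indices would then select the frames of one clip to pass through the feature extractor.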

Auto-Encoder for Distribution Learning

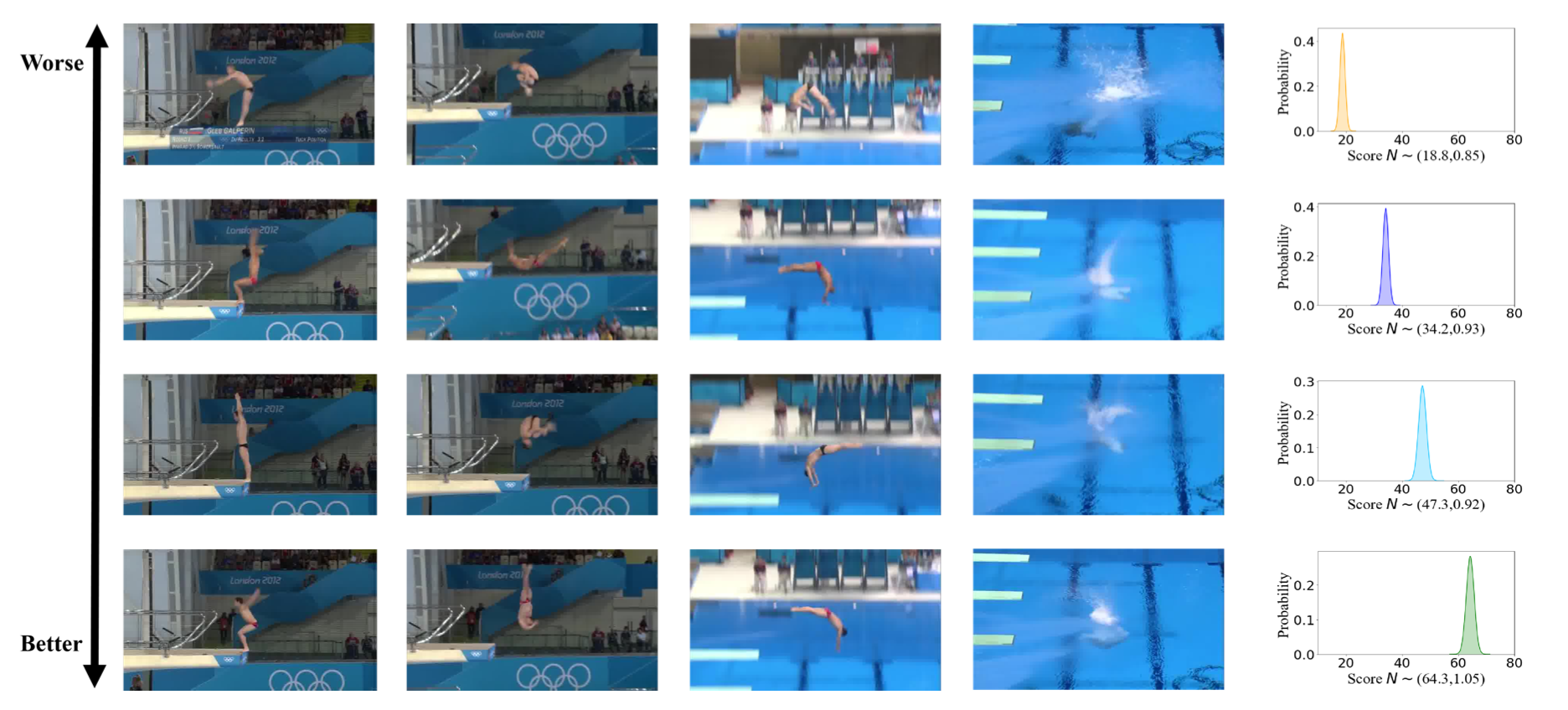

Compared with the regression-based method and the label distribution learning method, our approach combines the characteristics of both. The action features are encoded into a score distribution, and the final result is sampled from the auto-encoder’s output. This architecture enables learning a continuous distribution without loss in the training procedure and quantifies the uncertainty of the action score with high accuracy.

The encoder uses a simple yet efficient neural network, namely a multi-layer perceptron (MLP), to encode the mean and variance simultaneously. The input 1024-dimensional feature vector x is encoded into the parameters \(μ(x)\) and \(σ^2(x)\).
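A minimal sketch of such an encoder, in plain Python for illustration only. The hidden size, the single shared hidden layer, the ReLU activation, the random initialization, and the softplus used to keep the variance positive are all assumptions; the source only states that an MLP maps the 1024-dimensional feature to \(μ(x)\) and \(σ^2(x)\).

```python
import math
import random


def linear(x, weights, bias):
    """Affine layer: one output per (weight row, bias) pair."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(values):
    return [max(0.0, v) for v in values]


def softplus(a):
    """Numerically stable log(1 + exp(a)); keeps the variance positive (assumption)."""
    return math.log1p(math.exp(-abs(a))) + max(a, 0.0)


def init_layer(n_in, n_out, rng):
    """Random weights and zero biases for an n_in -> n_out layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class DistributionEncoder:
    """Hypothetical MLP with a shared hidden layer and two scalar heads."""

    def __init__(self, in_dim=1024, hidden_dim=16, seed=0):
        rng = random.Random(seed)
        self.hidden = init_layer(in_dim, hidden_dim, rng)
        self.mu_head = init_layer(hidden_dim, 1, rng)
        self.var_head = init_layer(hidden_dim, 1, rng)

    def __call__(self, x):
        h = relu(linear(x, *self.hidden))
        mu = linear(h, *self.mu_head)[0]              # mean of the score
        var = softplus(linear(h, *self.var_head)[0])  # variance of the score
        return mu, var


# Usage with a random stand-in for a 1024-dimensional video feature.
feature_rng = random.Random(1)
x = [feature_rng.random() for _ in range(1024)]
mu, var = DistributionEncoder()(x)
```

Predicting the variance through a positive map is one common design choice; predicting the log-variance and exponentiating it is another.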

Treating the action score as a random variable, we need to learn its distribution and then sample the predicted score from the learned distribution. The score \(y\) is modeled as Gaussian with mean \(\mu(\boldsymbol{x})\) and variance \(\sigma^{2}(\boldsymbol{x})\):

\[
p\left(y ; \mu(\boldsymbol{x}), \sigma^{2}(\boldsymbol{x})\right)=\frac{1}{\sqrt{2 \pi \sigma^{2}(\boldsymbol{x})}} \exp \left(-\frac{(y-\mu(\boldsymbol{x}))^{2}}{2 \sigma^{2}(\boldsymbol{x})}\right)
\]
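As a quick numerical check of this density, the sketch below evaluates it directly. The function name `gaussian_pdf` and the example values are assumptions chosen for illustration.

```python
import math


def gaussian_pdf(y, mu, var):
    """Density of a Gaussian with mean `mu` and variance `var`, evaluated at `y`."""
    if var <= 0:
        raise ValueError("variance must be positive")
    return math.exp(-((y - mu) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


# Hypothetical example: predicted mean 80 with variance 4.
at_mean = gaussian_pdf(80.0, mu=80.0, var=4.0)   # highest density, at the mean
one_std = gaussian_pdf(82.0, mu=80.0, var=4.0)   # one standard deviation away
```

A smaller predicted variance concentrates the density around the mean, which is how the model expresses confidence in a score.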

To generate a sample from the Gaussian-distributed \(y\) as the predicted score while making full use of both parameters of the score distribution, we invoke the reparameterization trick. Following the reparameterization trick in VAE [3], let \(z\) be a random variable with \(z \sim q(z ; \phi)\), where \(\phi\) is its parameter. We can then express \(z\) as \(z=g(\epsilon ; \phi)\), where \(\epsilon\) is an auxiliary variable with independent marginal \(p(\epsilon)\) and \(g(\cdot ; \phi)\) is a deterministic function parameterized by \(\phi\). Applied to the score distribution, this gives:

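\[
y=\mu(\boldsymbol{x})+\epsilon \cdot \sigma(\boldsymbol{x}), \quad \epsilon \sim \mathcal{N}(0,1)
\]

Here \(\sigma(\boldsymbol{x})\) is the standard deviation, i.e. the square root of the encoded variance \(\sigma^{2}(\boldsymbol{x})\), so that \(y\) has mean \(\mu(\boldsymbol{x})\) and variance \(\sigma^{2}(\boldsymbol{x})\).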
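The sampling step can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' implementation: it assumes \(\sigma^{2}(\boldsymbol{x})\) denotes the variance, so the standard normal noise is scaled by the standard deviation, and the name `sample_score` and the example values are hypothetical.

```python
import math
import random


def sample_score(mu, var, rng):
    """Reparameterized Gaussian sample: mu + eps * sqrt(var), with eps ~ N(0, 1)."""
    if var < 0:
        raise ValueError("variance must be non-negative")
    eps = rng.gauss(0.0, 1.0)  # noise drawn independently of mu and var
    return mu + eps * math.sqrt(var)


# Empirical check with hypothetical parameters: mean 80, variance 4.
rng = random.Random(0)
samples = [sample_score(80.0, 4.0, rng) for _ in range(20000)]
sample_mean = sum(samples) / len(samples)
sample_var = sum((s - sample_mean) ** 2 for s in samples) / len(samples)
```

Because the randomness lives only in `eps`, the sample is a deterministic function of the mean and variance, which is what lets gradients reach the encoder in an autodiff framework.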

Experiments

We use Spearman’s rank correlation between the ground-truth and predicted score series to measure the performance of our methods. Spearman’s correlation is defined as:

\[
\rho=\frac{\sum_{i}\left(p_{i}-\bar{p}\right)\left(q_{i}-\bar{q}\right)}{\sqrt{\sum_{i}\left(p_{i}-\bar{p}\right)^{2} \sum_{i}\left(q_{i}-\bar{q}\right)^{2}}}
\]

where \(p_{i}\) and \(q_{i}\) are the ranks of the \(i\)-th sample in the two series, and \(\bar{p}\) and \(\bar{q}\) are the mean ranks.
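The metric can be computed by ranking both series and applying the formula above to the ranks. The sketch below is a plain-Python illustration; the function names and the choice to give tied values their average rank are assumptions.

```python
import math


def average_ranks(values):
    """1-based ranks of `values`; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(truth, predicted):
    """Spearman's rank correlation between two equally long score series."""
    if len(truth) != len(predicted) or len(truth) < 2:
        raise ValueError("need two series of equal length >= 2")
    p, q = average_ranks(truth), average_ranks(predicted)
    p_bar, q_bar = sum(p) / len(p), sum(q) / len(q)
    num = sum((a - p_bar) * (b - q_bar) for a, b in zip(p, q))
    den = math.sqrt(
        sum((a - p_bar) ** 2 for a in p) * sum((b - q_bar) ** 2 for b in q)
    )
    if den == 0.0:
        raise ValueError("correlation is undefined for a constant series")
    return num / den


# Hypothetical scores: two adjacent pairs are swapped in the prediction.
rho = spearman_rho([1.0, 2.0, 3.0, 4.0, 5.0], [2.0, 1.0, 4.0, 3.0, 5.0])
```

Only the ordering of the scores matters, so any monotonically increasing rescaling of the predictions leaves the value unchanged.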

References (incomplete)

[1] What and How Well You Performed? A Multitask Learning Approach to Action Quality Assessment

[2] Uncertainty-Aware Score Distribution Learning for Action Quality Assessment

[3] Auto-Encoding Variational Bayes

In cooperation with zby (seu); supervisor: xyf (seu)

Github link